
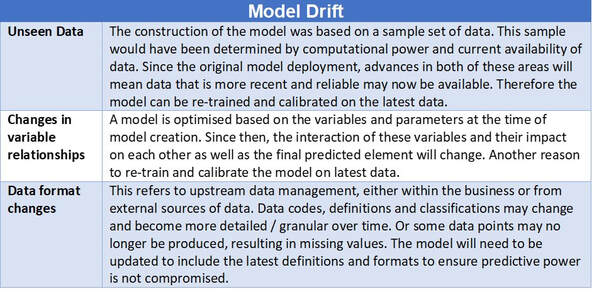

Model Monitoring – Ongoing Performance and Validation

Maintaining models after deployment is one of the key stages of the overall model lifecycle. Once a model is deployed, it may be tempting to view the modelling process as complete. In many ways, it is only just beginning. Model monitoring is the operational stage that follows deployment: once a model is in use within the business, the next priority is to ensure it is used as effectively as possible. It is therefore essential to monitor the model properly for as long as it is in use, in order to gain maximum benefit, stay in control and maintain an audit trail.

The purpose of model monitoring is to ensure that the model maintains a predetermined level of performance, but it also covers aspects such as prediction errors, process errors and latency.

The main reason model monitoring is so important is Model Drift: the overall predictive power of a model gradually degrades over time. This can be caused by several factors, such as:

Figure 01 – Model Drift

There is also the issue of Concept Drift, whereby the expectations of what constitutes a correct prediction change over time even though the distribution of the input data has not changed. In other words, the relationship between the model predictor(s) and the outcome being predicted has changed. This Concept Drift could occur because of changes in:

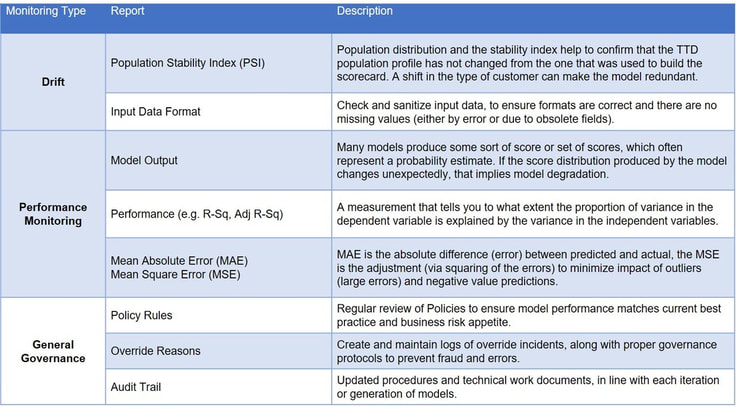
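The concept drift described above can be illustrated with a small simulation. This is a minimal sketch under stated assumptions, not a production monitoring tool: `deployed_model`, `true_outcome`, `make_batch` and `monitor` are hypothetical names, the decision boundaries are invented for illustration, and the required accuracy of 0.9 is an arbitrary stand-in for the "predetermined required level of performance". Every batch uses exactly the same inputs, so the input distribution never changes; only the relationship between the predictor and the outcome moves.

```python
from typing import List, Tuple

# Hypothetical deployed model: it learned the rule "outcome is 1 when x > 0.5".
def deployed_model(x: float) -> int:
    return 1 if x > 0.5 else 0

# Hypothetical ground truth in the live environment. Moving `boundary` over
# time changes the predictor-outcome relationship (concept drift).
def true_outcome(x: float, boundary: float) -> int:
    return 1 if x > boundary else 0

def make_batch(boundary: float, n: int = 100) -> List[Tuple[float, int]]:
    # The same evenly spaced grid of inputs on (0, 1) is used in every batch,
    # so the input distribution is unchanged; only the labels differ.
    xs = [(k + 0.5) / n for k in range(n)]
    return [(x, true_outcome(x, boundary)) for x in xs]

def monitor(batches, required_accuracy: float = 0.9):
    """Score each batch and flag any that fall below the required level."""
    report = []
    for i, batch in enumerate(batches):
        # Count correct predictions (the complement of the prediction errors).
        correct = sum(deployed_model(x) == y for x, y in batch)
        accuracy = correct / len(batch)
        report.append((i, accuracy, accuracy < required_accuracy))
    return report

if __name__ == "__main__":
    # Hypothetical scenario: the true boundary drifts from 0.5 up to 0.8.
    boundaries = [0.5, 0.5, 0.7, 0.8]
    for i, accuracy, alert in monitor([make_batch(b) for b in boundaries]):
        status = "ALERT: below required level" if alert else "ok"
        print(f"batch {i}: accuracy={accuracy:.2f} {status}")
```

In this scenario the first two batches score 1.00, while the later batches fall to 0.80 and 0.70 and are flagged. Because the inputs are identical in every batch, a check on the input data alone would not detect this drift; it only shows up when predictions are compared against realised outcomes.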

There are, then, a number of factors that could change what is defined as a successful prediction from the model. Given this, and changing regulatory considerations, ongoing model monitoring is important not only for maintaining the validity of the models but also for future business success. Because model degradation can have a significant negative impact, an effective model monitoring process aims to identify and mitigate potential sources of model drift as early as possible.

Figure 02 – Model Monitoring Framework

Periodic Review

A successful model governance framework will always include a full Periodic Review (PR) and validation of models, regardless of whether the model has undergone any change during its operational history. The PR differs from the usual ongoing performance monitoring of models in that it is a more holistic review of the model concept, including:

The frequency of these PRs should depend on the complexity and criticality (material impact) of the model: the higher a model scores on these factors, the more frequent and in-depth its PR should be.

In my next article, I will outline the importance of Model Inventory.

If you wish to discuss any of the points raised, or are interested in finding out how we can help you review or implement your own Model Risk Management (MRM) and Model Governance framework, please contact us to book your free Discovery consultation.

Brendan Jayagopal, Founder and Managing Director, Blue Label Consulting


Brendan Jayagopal launched Blue Label Consulting in 2011. Through innovative use of data and emerging data sciences such as AI and other quantitative methods, he delivers robust analytics and actionable insights to solve business problems.

February 2021
